Nvidia is developing a new processor dedicated to inference computing, designed to let AI models respond faster to user queries. The plans were reported in connection with the company's GTC conference in March, according to Mediafax, citing Reuters.

The new platform is set to be unveiled at the GTC developers conference in San Jose and will include a chip designed by startup Groq. The solution aims to enhance performance in tasks where response speed is critical, such as code generation and conversational applications.

Strategic partnerships and inference competition

According to the report, OpenAI is not fully satisfied with the speed of current Nvidia chips for certain workloads, including software development. The new hardware could cover approximately 10% of OpenAI’s inference computing needs.

Previously, OpenAI had held discussions with Cerebras and Groq to secure faster inference chips. Nvidia, however, signed a $20 billion licensing agreement with Groq, effectively ending those talks.

In September, Nvidia announced an investment of up to $100 billion in OpenAI, a deal that gives Nvidia an equity stake in the company and gives OpenAI access to advanced chips.

The move highlights intensifying competition in AI infrastructure, where optimizing inference performance is becoming as critical as training power.

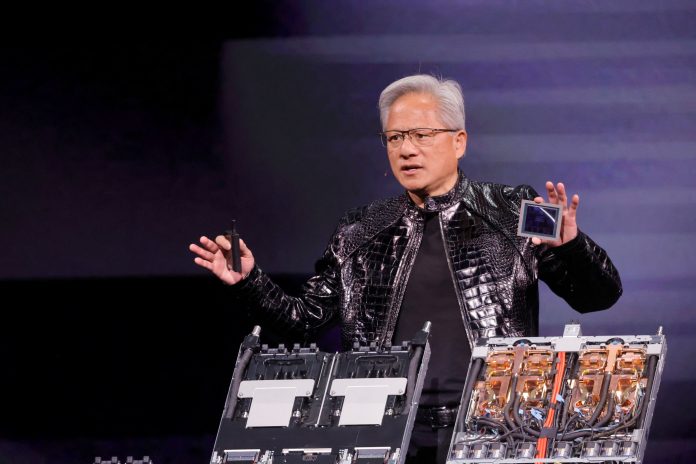

Photo: Daily Sabah